By John Gordon, in partnership with Uncertainty Experts

"We have a duty and obligation to be honest about what's coming." That is Dario Amodei, CEO of Anthropic. Kristalina Georgieva, Managing Director of the IMF, calls it a "tsunami." Kai-Fu Lee projects 50% of jobs displaced by 2027.

If those quotes tightened something in your chest, pay attention to that sensation. It is Fear. And it is entirely rational.

Article 2 mapped three technical pillars: Skills, MCP, and Markdown. Each connects to a behavioural state identified through Uncertainty Experts' research with UCL. This article takes the first of those states and pulls it apart, because Fear is doing more damage to your L&D function than AI ever will.

The data wall

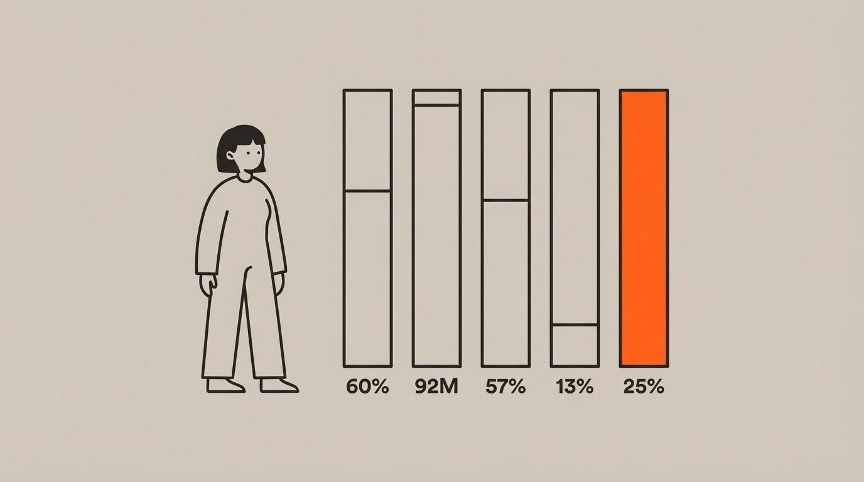

The numbers arrive from every direction. The IMF estimates 60% of jobs in advanced economies will be affected. The WEF projects 92 million roles displaced, offset by 170 million created: a net gain of 78 million. McKinsey found 57% of working hours automatable, with nearly a third of organisations expecting workforce reductions of 3% or more. Stanford researchers using ADP payroll data documented a 13% decline in entry-level hiring in AI-exposed roles. Marc Benioff said Salesforce would hire no more software engineers in 2025.

Then comes the contrarian data: Yale Budget Lab found no significant displacement yet. Sam Altman warned that "AI washing is real."

Both positions are correct. AI washing is real. The transformation is also real. Nobody knows the exact pace. But every position on the spectrum agrees on one thing: skills are the differentiator. Not tools. Not strategy documents. Not AI policies. Skills.

That consensus should be reassuring. For most L&D teams, it is not.

What Fear actually looks like

Fear rarely presents as open anxiety. It presents as caution dressed up as governance.

"We need a policy before we can start." "The risk assessment has not been completed." "Legal hasn't signed off." Each statement sounds responsible. Each delays capability building by months. And each contains a thread of personal threat: if a machine can do parts of my job, and I am the one meant to be training others, what does that say about me?

PwC's Global AI Jobs Barometer reports a 25% wage premium for AI-skilled professionals. That number has a shadow side. Every month without structured capability building widens the gap in both directions. Professionals building skills now are pulling ahead. Professionals waiting are not standing still. They are falling behind at an accelerating rate.

PwC's Global CEO Survey found 56% of CEOs report no measurable value from AI investments. The NBER found 89% of firms report no productivity impact. Those numbers look like vindication for cautious teams. They are not. They describe organisations that bought tools without building capability. The tools did not fail. The approach failed.

Why the brain makes this worse

Uncertainty Experts' work with UCL provides a framework for understanding what happens inside the Fear response. The brain evolved core threat reactions: fight, flight, freeze, and fawn. None evolved for workplace uncertainty about emerging technology.

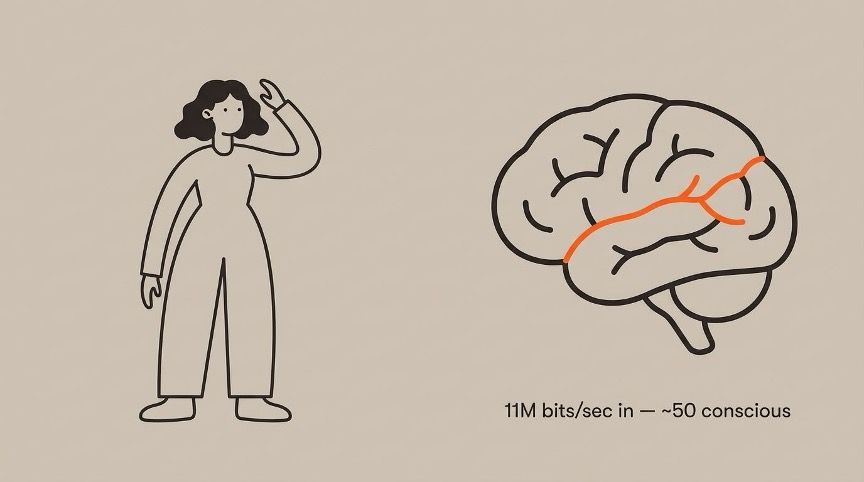

The body processes roughly 11 million bits of sensory data per second. Conscious thought handles roughly 50. The gap between what you feel and what you can articulate is enormous. An L&D professional may struggle to explain their resistance to an AI pilot. The resistance is real. The reasoning has not caught up with the sensation.

Research by de Berker et al. at UCL, published in Nature Communications, found that not knowing whether pain is coming produces more stress than knowing it is certain. Read that again. People prefer known discomfort over uncertain waiting. This is why teams sometimes prefer a definitive "no" on AI to the ongoing uncertainty of "we should probably start."

HBR and UC Berkeley researchers found 62% of entry-level AI adopters report burnout. Fear is not just emotional. It has measurable workplace consequences.

What happens when L&D professionals build AI skills

The role does not disappear. It expands. An L&D professional with AI skills becomes an agent orchestrator: directing AI across four domains while applying judgement, organisational knowledge, and pedagogical expertise that AI does not have.

A needs analysis that took two days takes 20 minutes. A skills gap assessment across 200 roles, previously a six-week project, completes in a week. The ratio of strategic work to production work shifts dramatically.

Conviction Narrative Theory, developed by Johnson, Bilovich, and Tuckett at UCL, describes how imagined futures shape present decisions with the same emotional force as real memories. The team imagining failure is experiencing it emotionally, right now. Structured action replaces the imagined narrative with a real one.

Fear responds to three things: evidence, safety, and action. Show your team the full data, not just the alarming headlines. Reduce the cost of experimentation: one AI Skill Pack, one team, clear boundaries. Give people permission to try and permission to fail. The cost of a failed experiment is a few hours. The cost of six months of avoidance is thousands of hours of capability never built.

Start small and start now. One person, one skill, one task, this week. Article 4 looks at what happens when teams move past Fear but find themselves stuck in something subtler and more dangerous: Fog.

The Finer Vision AI Maturity Assessment is free and takes 10 minutes. It identifies which states are present in your team and gives you specific recommendations for what to do next.

Take the free AI Maturity Assessment at finervision.com/assessment

References

1. Amodei, D. (May 2025). Axios interview. "Duty and obligation to be honest."

2. Georgieva, K. (January 2026). WEF Davos speech. "Tsunami." 60% affected.

3. Lee, K-F. 50% job displacement by 2027.

4. World Economic Forum (January 2025). Future of Jobs Report 2025. 92M displaced, 170M created.

5. McKinsey (November 2025). State of AI survey. 57% automatable. Nearly a third expect 3%+ workforce reduction.

6. Brynjolfsson et al. (August 2025). Stanford / ADP payroll data. 13% entry-level hiring decline.

7. Benioff, M. (December 2024). 20VC podcast. No more engineers.

8. Yale Budget Lab (October 2025). No significant displacement yet.

9. Altman, S. (February 2026). India AI Impact Summit. "AI washing is real."

10. PwC / Burning Glass Institute. Global AI Jobs Barometer. 25% wage premium.

11. de Berker et al. (2016). "Computations of uncertainty mediate acute stress responses in humans." Nature Communications.

12. Zimmermann, M. (1989). Sensory processing: 11M bits/sec sensory, ~50 bits/sec conscious.

13. HBR / UC Berkeley (February 2026). "AI Doesn't Reduce Work." 62% entry-level burnout.

14. Johnson, H., Bilovich, A., Tuckett, D. (2022). Conviction Narrative Theory. Behavioral and Brain Sciences, Cambridge University Press.

15. PwC (January 2026). 29th Global CEO Survey. 56% of CEOs no measurable value from AI.

16. National Bureau of Economic Research (February 2026). "Firm Data on AI" (w34836). 89% report no productivity impact.

17. Uncertainty Experts / UCL. Fear, Fog, Stasis behavioural patterns.